
Then look at the output on S3 with s5cmd in my example the result is three objects written (5MB, 5MB, and 17KB in size). Logstash will automatically pick up this new log data and start writing to S3. > docker run -it -rm mingrammer/flog > logs/testdata.log In a second terminal, I generate synthetic data with the flog tool, writing into the shared “logs/” directory: Starting with the configuration file shown above, customize the fields for your specific FlashBlade environment and place them in $/logs/ directory, which is where Logstash will look for incoming data. To test the flow of data through Logstash to FlashBlade S3, I use the public docker image for Logstash. The maximum amount of data loss is the smaller of “size_file” and “time_file” worth of data. Larger flushes result in more efficient writes and object sizes, but result in a larger window of possible data loss if a node fails. Logstash can trade off efficiency of writing to S3 with the possibility of data loss through the two configuration options “time_file” and “size_file,” which control the frequency of flushing lines to an object.

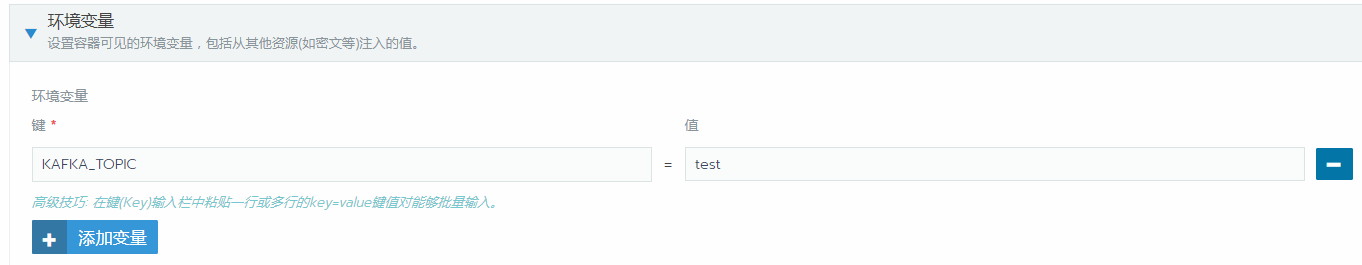

Path-style addressing does not require co-configuration with DNS servers and therefore is simpler in on-premises environments.įor a more secure option, instead of specifying the access/secret key in the pipeline configuration file, they should also be specified as environment variables AWS_ACCESS_KEY and AWS_SECRET_ACCESS_KEY. Note that the force_path_style setting is required configuring a FlashBlade endpoint needs path style addressing instead of virtual host addressing. The input section is a trivial example and should be replaced by your specific input sources (e.g., filebeats). The diagram below illustrates this architecture, which balances expensive indexing and raw data storage.Īn example Logstash config highlights the parts necessary to connect to FlashBlade S3 and send logs to the bucket “logstash,” which should already exist. A second output filter to S3 would keep all log lines in raw (un-indexed) form for ad-hoc analysis and machine learning. This plugin is simple to deploy and does not require additional infrastructure and complexity, such as a Kafka message queue.Ī common use-case is to leverage an existing Logstash system filtering out a small percentage of log lines that are sent to an Elasticsearch cluster. Logstash aggregates and periodically writes objects on S3, which are then available for later analysis. To aggregate logs directly to an object store like FlashBlade, you can use the Logstash S3 output plugin. Log/worker/source=index/year=2020/month=04/day=27/hour=14/worker_ article originally appeared on and has been republished with permission from the author. S3/input.go:386 createEventsFromS3Info failed for logs/worker/source%3Dindex/year%3D2020/month%3D04/day%3D27/hour%3D14/worker_: GetObject request canceled: RequestCanceled: request context canceled T17:18:39.279Z DEBUG s3/input.go:257 handleSQSMessage succeed and returned 1 sets of S3 log info

T17:18:39.278Z DEBUG s3/input.go:257 handleSQSMessage succeed and returned 1 sets of S3 log info T17:18:39.277Z DEBUG s3/input.go:257 handleSQSMessage succeed and returned 1 sets of S3 log info

The below is logs after changing the log to debug.
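For reference, the Logstash S3 output settings discussed in this article could be combined roughly as in the sketch below. This is a minimal illustration, not the author's exact configuration: the endpoint URL and the input path are hypothetical placeholders, the flush values are arbitrary examples, and credentials are assumed to be supplied through environment variables rather than in the file.

```
# Sketch of a Logstash pipeline writing raw log lines to FlashBlade S3.
# Assumptions: endpoint URL and input path are placeholders; access and
# secret keys are taken from environment variables, not from this file.
input {
  file {
    # Hypothetical directory that Logstash watches for incoming log files
    path => ["/logs/*"]
  }
}

output {
  s3 {
    # Placeholder FlashBlade data VIP; replace with your own endpoint
    endpoint => "https://flashblade.example.com"
    # The bucket must already exist
    bucket => "logstash"
    # FlashBlade needs path-style rather than virtual-host addressing
    additional_settings => { "force_path_style" => true }
    # Flush to a new object after this many minutes...
    time_file => 5
    # ...or after this many bytes (5 MiB here), whichever comes first
    size_file => 5242880
    codec => "plain"
  }
}
```

With values like these, at most about five minutes or 5 MiB of log lines, whichever limit is reached first, would be buffered on the Logstash node and exposed to loss if that node failed before flushing.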
